Learning Generative Structure Prior for Blind Text Image Super-resolution

CVPR 2023

Paper

Abstract

Blind text image super-resolution (SR) is challenging as one needs to cope with diverse font styles and unknown degradation. To address the problem, existing methods perform character recognition in parallel to regularize the SR task, either through a loss constraint or by conditioning intermediate features. Nonetheless, the high-level prior could still fail when encountering severe degradation. The problem is further compounded for characters with complex structures, e.g., Chinese characters that combine multiple pictographic or ideographic symbols into a single character. In this work, we present a novel prior that focuses more on the character structure. In particular, we learn to encapsulate rich and diverse structures in a StyleGAN and exploit such generative structure priors for restoration. To restrict the generative space of StyleGAN so that it obeys the structure of characters yet remains flexible in handling different font styles, we store the discrete features for each character in a codebook. The code subsequently drives the StyleGAN to generate high-resolution structural details to aid text SR. Compared to priors based on character recognition, the proposed structure prior exerts stronger character-specific guidance to restore faithful and precise strokes of a designated character. Extensive experiments on synthetic and real datasets demonstrate the compelling performance of the proposed generative structure prior in facilitating robust text SR.

EMbedding generAtive stRuCture priOr for blind Chinese Text SR (MARCONet)

Framework

MARCONet

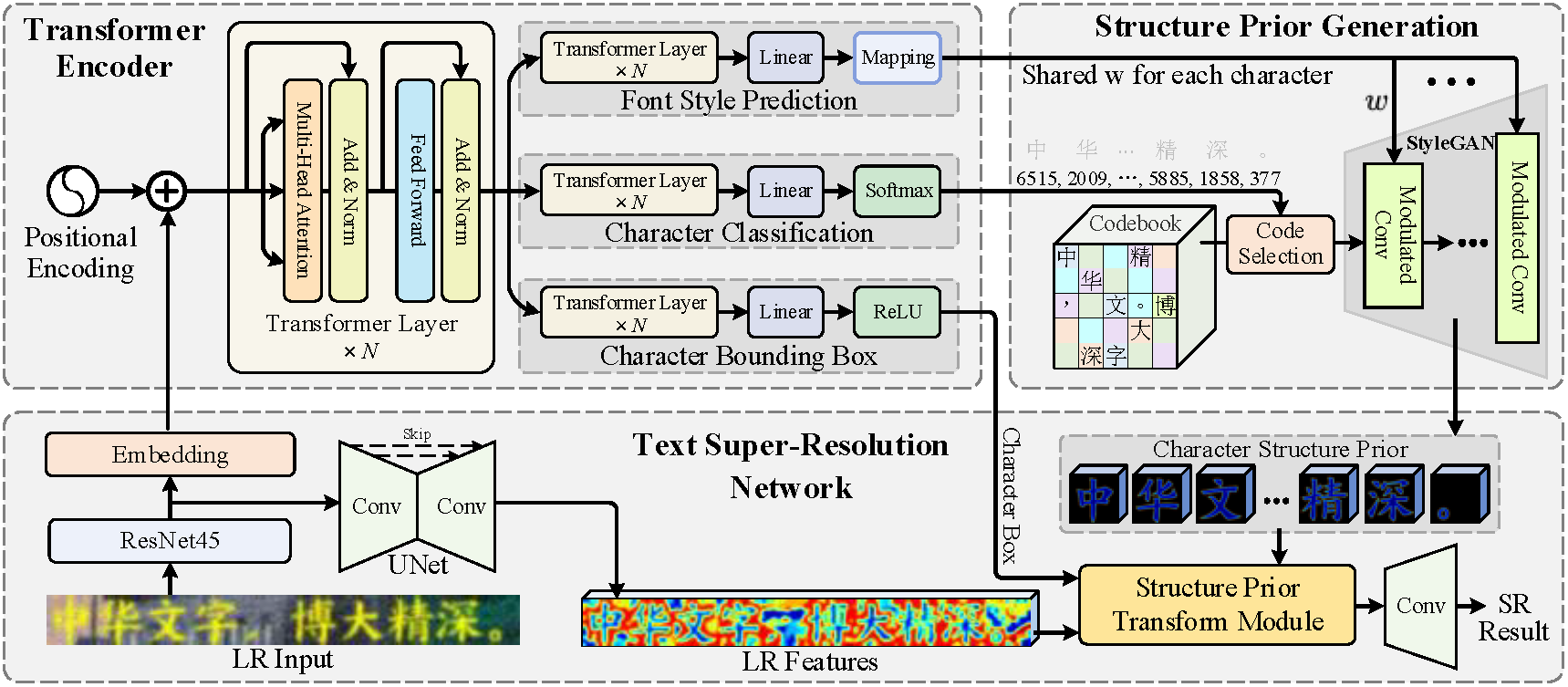

Overview of MARCONet. It contains three parts: (i) a Transformer encoder that predicts the font style, class, and bounding box of each character from the LR input, (ii) structure prior generation, in which a pre-trained StyleGAN generates a reliable structure prior for each character, and (iii) the SR process, which reconstructs the SR output by incorporating each character's structure prior.
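The three-stage data flow in the caption can be sketched as below. This is a minimal illustration, not the released MARCONet code: `marconet_forward`, `toy_encoder`, `toy_prior` and `toy_sr` are hypothetical names, boxes are assumed to be pixel-space `(x1, y1, x2, y2)` tuples, a single style vector `w` per text line is assumed, and the toy stand-ins exist only to make the composition runnable.

```python
import numpy as np

SCALE = 4  # assumed SR factor for this toy example


def marconet_forward(lr, encoder, prior_generator, sr_net):
    """Hypothetical composition of the three MARCONet stages."""
    # (i) encoder: LR text line -> style vector w, per-character class ids and boxes
    w, char_ids, boxes = encoder(lr)
    # (ii) structure prior: one prior per character from its class id and the style w
    priors = [prior_generator(cid, w) for cid in char_ids]
    # (iii) SR: reconstruct the HR line using the per-character priors at their boxes
    return sr_net(lr, priors, boxes)


def toy_encoder(lr):
    # Toy stand-in: assume square character cells of side H laid out left to right
    h, width = lr.shape
    n = width // h
    boxes = [(i * h, 0, (i + 1) * h, h) for i in range(n)]  # (x1, y1, x2, y2)
    return np.zeros(512), list(range(n)), boxes


def toy_prior(char_id, w):
    # Toy stand-in: a constant HR patch whose value encodes the character id
    return np.full((16 * SCALE, 16 * SCALE), float(char_id))


def toy_sr(lr, priors, boxes):
    # Naive nearest-neighbour upsampling, then add each prior inside its HR box
    out = np.kron(lr, np.ones((SCALE, SCALE)))
    for prior, (x1, y1, x2, y2) in zip(priors, boxes):
        out[y1 * SCALE:y2 * SCALE, x1 * SCALE:x2 * SCALE] += prior
    return out


sr = marconet_forward(np.zeros((16, 48)), toy_encoder, toy_prior, toy_sr)
print(sr.shape)  # (64, 192)
```

Passing the stages in as callables keeps the sketch agnostic to how each stage is actually implemented.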

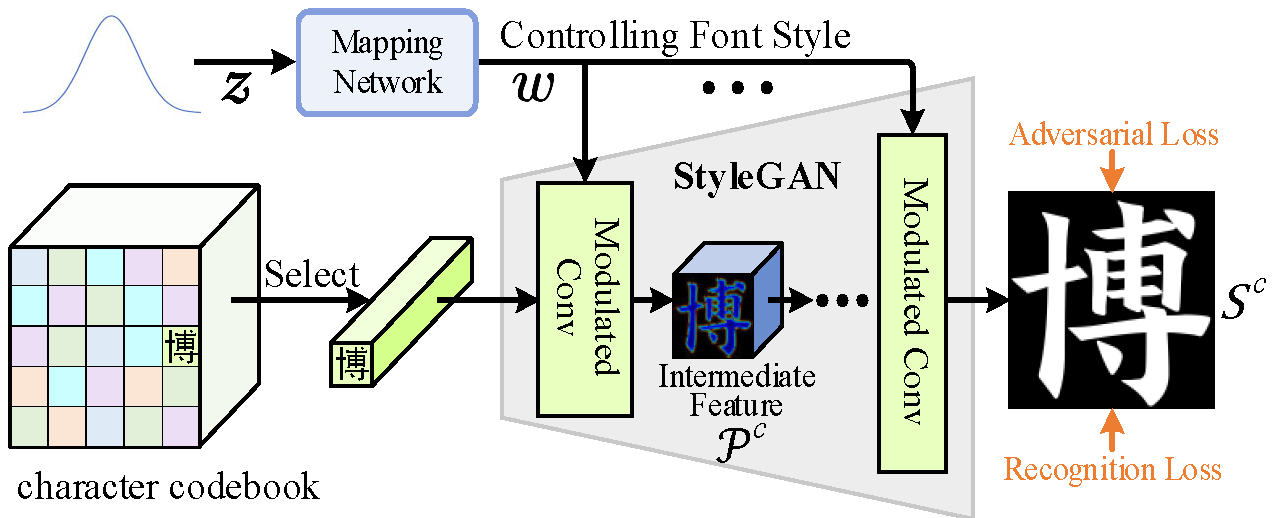

Pre-training the generative structure prior for each character. The codebook stores a discrete code for each character, and each code serves as the constant input to StyleGAN for generating a specific high-resolution character. The intermediate features encapsulate the generative structure prior and are later used to guide text SR.
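The role of the codebook can be illustrated with a small sketch, assuming each character's code is a fixed feature map indexed by its class id. `CharCodebook` and `toy_generator` are hypothetical; the entries are random placeholders rather than learned codes, and the channel-wise scaling by `w` only stands in for the style modulation inside the real StyleGAN.

```python
import numpy as np


class CharCodebook:
    """Hypothetical codebook: one constant feature map per character class."""

    def __init__(self, num_chars, channels=8, size=4, seed=0):
        rng = np.random.default_rng(seed)
        # Placeholder entries; in MARCONet these would be learned during pre-training
        self.codes = rng.standard_normal((num_chars, channels, size, size))

    def lookup(self, char_id):
        # The same character id always returns the same code, whatever the font
        return self.codes[char_id]


def toy_generator(const, w):
    """Stand-in for StyleGAN: scale each channel of the constant input by w."""
    # const: (C, H, W) code from the codebook; w: (C,) style vector
    return const * w[:, None, None]


codebook = CharCodebook(num_chars=10)
code = codebook.lookup(3)
style_a, style_b = np.ones(8), np.linspace(0.5, 2.0, 8)
out_a, out_b = toy_generator(code, style_a), toy_generator(code, style_b)
```

Keeping character identity in the code and style in `w` is what allows the structure to stay fixed while the rendering style varies.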

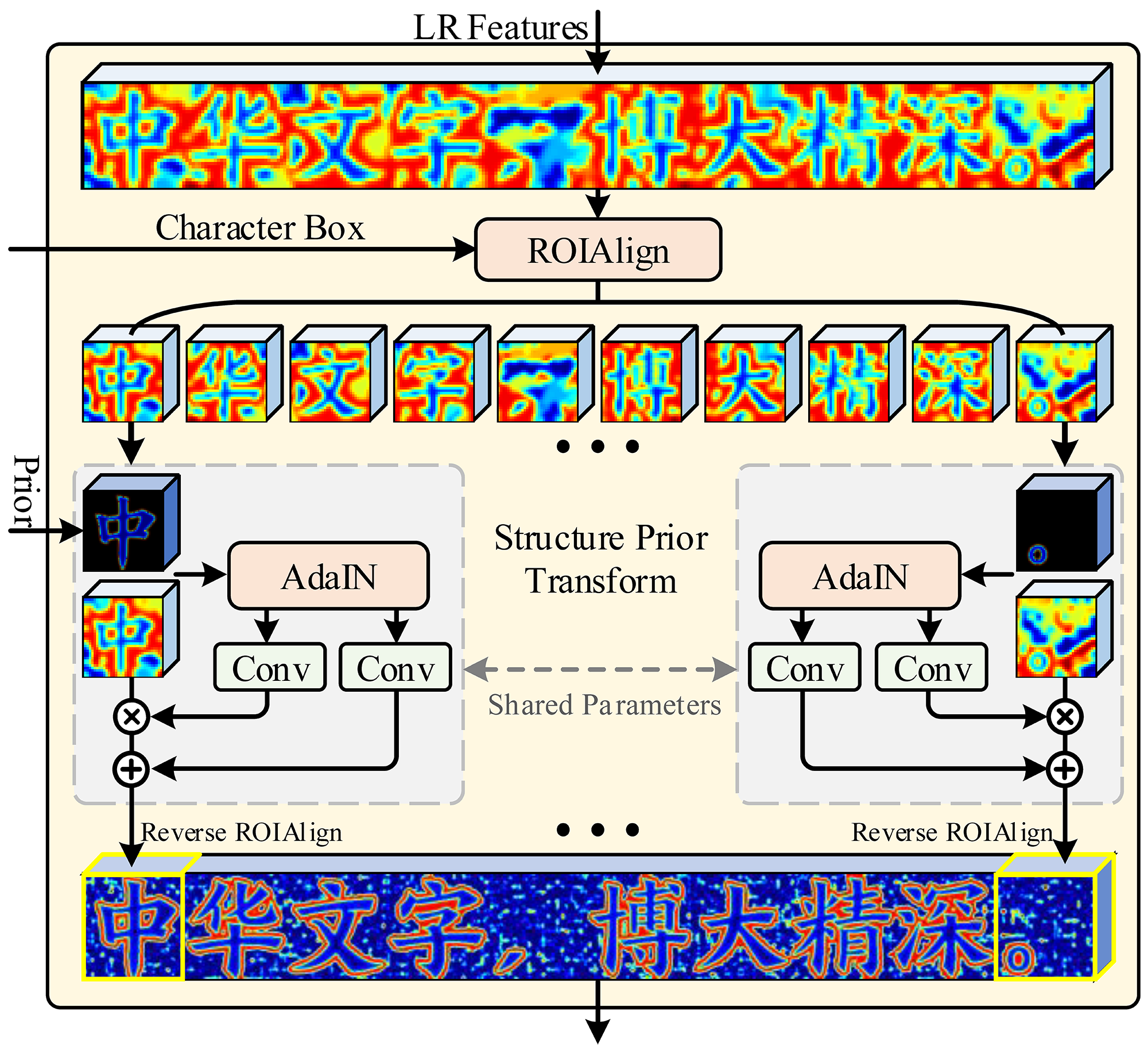

Structure prior transform module. Each LR character is cropped and aligned using its bounding box, and is super-resolved under the guidance of its structure prior.
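Cropping and aligning an LR character with its predicted box can be sketched as an image-space bilinear crop-and-resize, similar in spirit to RoIAlign. This is an assumption for illustration: `crop_and_resize` is a hypothetical helper, boxes are taken as pixel-space `(x1, y1, x2, y2)`, and the actual module may operate on feature maps instead.

```python
import numpy as np


def crop_and_resize(img, box, out_size=32):
    """Bilinearly resample the box (x1, y1, x2, y2) of a 2-D image to out_size x out_size."""
    x1, y1, x2, y2 = box
    h, w = img.shape
    # Map output pixel centres into the box, in input pixel coordinates
    ys = y1 + (np.arange(out_size) + 0.5) * (y2 - y1) / out_size - 0.5
    xs = x1 + (np.arange(out_size) + 0.5) * (x2 - x1) / out_size - 0.5
    ys, xs = np.clip(ys, 0, h - 1), np.clip(xs, 0, w - 1)
    # Integer neighbours and fractional weights for bilinear interpolation
    y0, x0 = np.floor(ys).astype(int), np.floor(xs).astype(int)
    y0n, x0n = np.minimum(y0 + 1, h - 1), np.minimum(x0 + 1, w - 1)
    wy, wx = (ys - y0)[:, None], (xs - x0)[None, :]
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x0n] * wx
    bottom = img[y0n][:, x0] * (1 - wx) + img[y0n][:, x0n] * wx
    return top * (1 - wy) + bottom * wy


lr_line = np.random.default_rng(0).random((16, 48))
patch = crop_and_resize(lr_line, (16, 0, 32, 16))  # second character cell, 32 x 32
```

Resampling every character to a fixed size gives each crop the same spatial layout as its structure prior, which is what makes the per-character guidance straightforward to apply.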

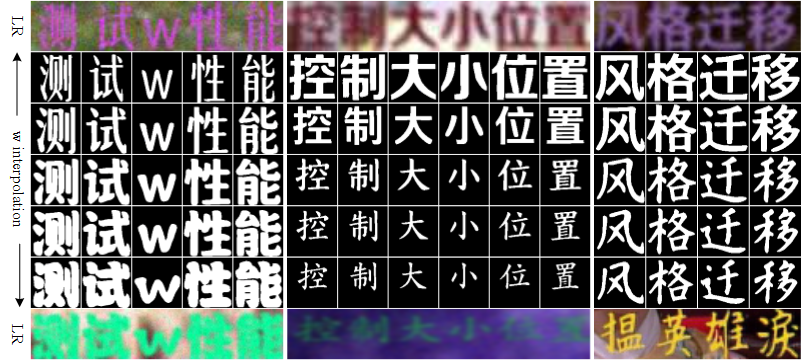

The W Space Controls the Font Style

The 1st and 7th rows are the LR inputs. The 2nd and 6th rows are the StyleGAN outputs generated with the w and code indices predicted from the corresponding LR input. The remaining rows show the results of interpolating w between the two LR inputs.
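The interpolation rows correspond to walking between two style vectors in the W space. A minimal sketch, assuming plain linear interpolation (the caption does not specify the scheme) and a hypothetical helper `interpolate_w`; each interpolated `w` would then be paired with a character code and fed to the generator.

```python
import numpy as np


def interpolate_w(w_a, w_b, num_steps):
    """Linearly interpolate between two style vectors, endpoints included."""
    alphas = np.linspace(0.0, 1.0, num_steps)
    # alpha = 0 gives w_a, alpha = 1 gives w_b
    return [(1.0 - a) * w_a + a * w_b for a in alphas]


rng = np.random.default_rng(0)
# 512-d style vectors are an assumption for this example
w_a, w_b = rng.standard_normal(512), rng.standard_normal(512)
path = interpolate_w(w_a, w_b, num_steps=5)
```

Because the character code is held separately, varying only `w` along this path is expected to change the font style while leaving the character structure intact, which is the behaviour the figure illustrates.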

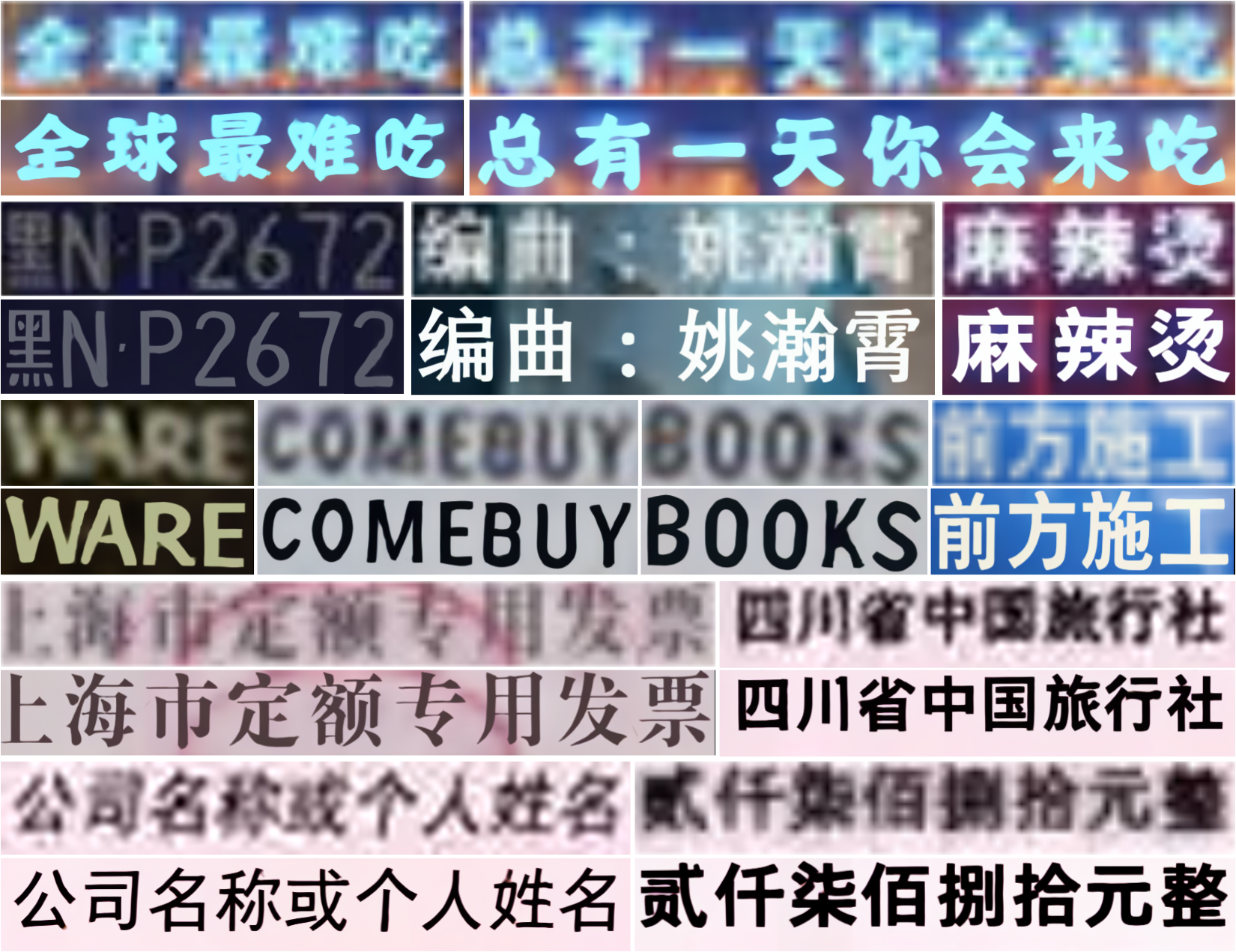

Real-world Chinese Text Image Super-resolution

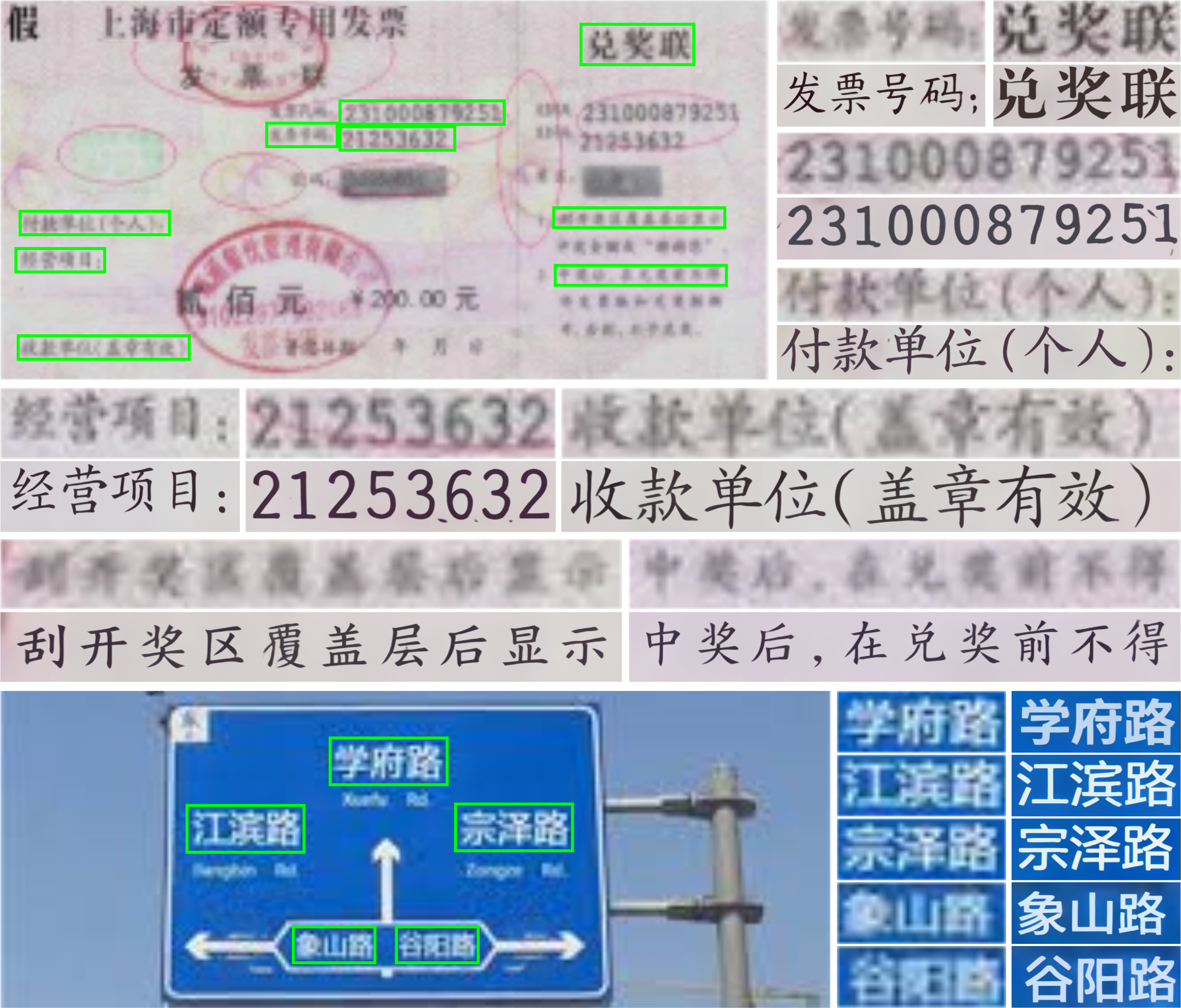

More super-resolution results on Chinese text segments cropped from real low-resolution images, including invoices, license plates, road signs, plaques, and video captions.


Citation

@InProceedings{li2023marconet,
  author = {Li, Xiaoming and Zuo, Wangmeng and Loy, Chen Change},
  title = {Learning Generative Structure Prior for Blind Text Image Super-resolution},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year = {2023}
}