Our Research

Research

MMLab@NTU

MMLab@NTU advances computer vision and multimodal AI by building models that perceive, reason, generate, and interact with the visual world. Our recent work spans foundation models for vision and vision-language understanding, controllable image and video generation, spatial intelligence and 3D content creation, efficient generative systems, and embodied AI.

Multimodal Foundation Models

We study foundation models that unify perception and generation across images, video, language, and structured visual representations. Our work explores how visual representations can be aligned, adapted, and composed for open-world understanding, dense prediction, promptable segmentation, and multimodal reasoning. We aim to build general-purpose models that transfer across tasks rather than being optimized for narrow benchmarks.

Agentic Multimodal AI

We study multimodal systems that do more than perceive: they remember, plan, reflect, and act. Our work explores long-context memory, world modeling, planning-oriented generation, multimodal grounding, self-reflective reasoning, and interactive agents that can use visual and linguistic context to solve extended tasks. We are interested in building agentic systems that operate coherently over time, integrate external tools and structured memory, and support decision-making in complex environments.

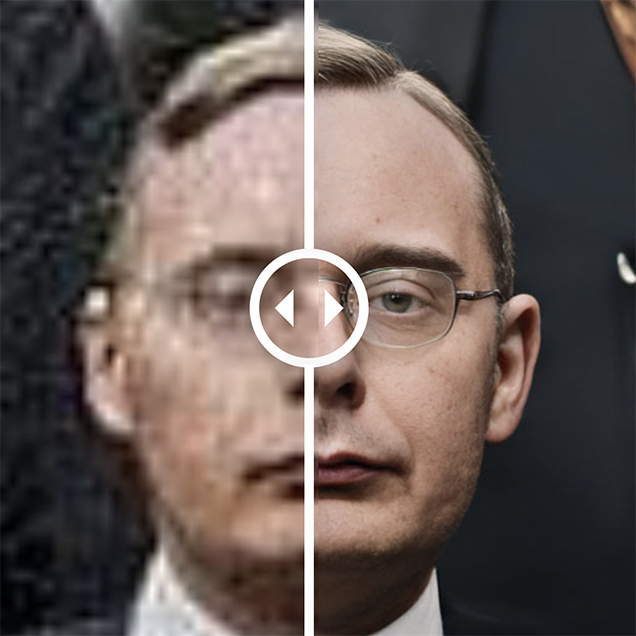

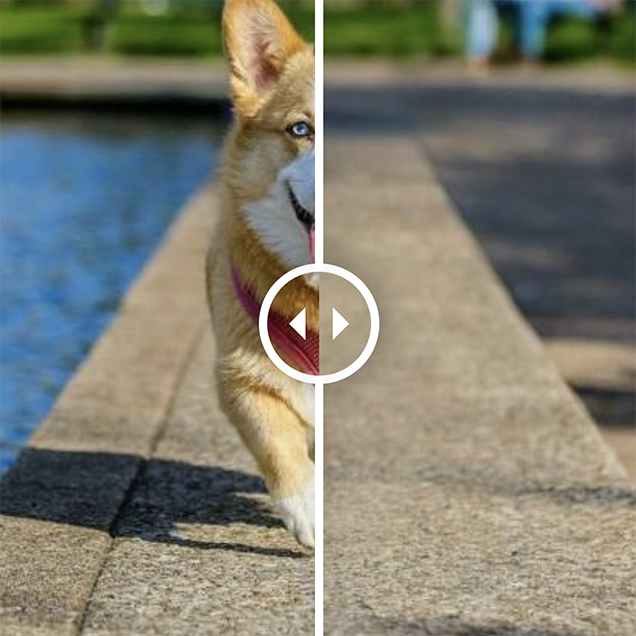

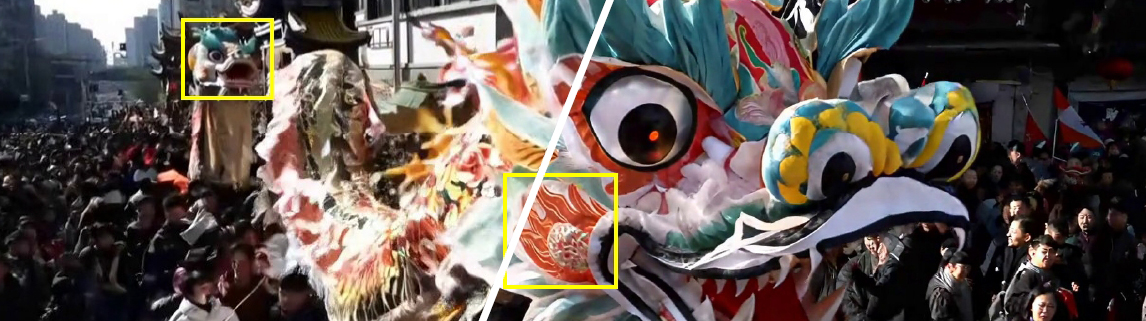

Generative AI for Vision

We build models for high-fidelity and controllable visual generation and editing, covering image restoration, super-resolution, video restoration, video translation, inpainting, matting, and language-guided editing. A central theme is integrating strong generative priors with structure and controllability, enabling outputs that are not only visually compelling but also stable, editable, and practical.

Spatial Intelligence

We investigate models that understand and generate geometry, appearance, and motion in 3D. Our work includes large-scale 3D generation, multi-view consistency, neural representations, and joint understanding-generation frameworks. We aim to bridge 2D perception and 3D reasoning to enable scalable creation and manipulation of immersive visual environments.

Efficient and Deployable Generative AI

We study how to make modern generative models more efficient, stable, and deployable. This includes compact architectures, efficient diffusion systems, improved latent representations, and optimized pipelines for real-world applications.

Embodied AI

We explore embodied intelligence by developing models that connect perception, memory, and action. Our work investigates world models, vision-language-action (VLA) systems, and multimodal reasoning for long-horizon tasks. We are particularly interested in compositional and modular architectures that support goal-directed behavior, environment interaction, and continual adaptation in dynamic settings.

Research

Video and Demos

Selected Work

Multimodal AI

- SenseNova-U1: Unifying Multimodal Understanding and Generation with NEO-unify Architecture

By Haiwen Diao et al. - From Pixels to Words - Towards Native Vision-Language Primitives at Scale

By Haiwen Diao et al. - Thinking with Camera: A Unified Multimodal Model for Camera-Centric Understanding and Generation

By Kang Liao et al. - Harmonizing Visual Representations for Unified Multimodal Understanding and Generation

By Size Wu et al. - LMMs-Eval: Reality Check on the Evaluation of Large Multimodal Models

By LMMs-Eval Team - LLaVA-OneVision: Easy Visual Task Transfer

By Bo Li et al.